AI Deepfake Sextortion: When Scammers Create Fake Explicit Images of You

AI deepfake sextortion creates fake explicit images from innocent social media photos without the victim's cooperation. Scammers use open-source AI tools to doctor LinkedIn headshots, corporate photos, and Facebook images into fabricated explicit content. Blackmail demands target professionals with threats to send images to employers, spouses, or professional licensing boards.

Federal and state extortion laws prosecute AI deepfake blackmail regardless of image authenticity. Victims face career destruction and marriage damage even when completely innocent, requiring immediate attorney representation to protect their reputation.

What Is AI Deepfake Sextortion?

AI deepfake sextortion uses artificial intelligence to create fake explicit images from innocent social media photos, then demands payment to prevent distribution to employers, family, or professional contacts.

Scammers pull benign photos from LinkedIn, Facebook, and Instagram. AI image-generation tools create fake explicit content in minutes. No victim cooperation is needed.

The blackmailer demands $500-$5,000 with threats to expose images. The FBI reports rapid increases in AI-powered blackmail schemes targeting professionals.

Victims are completely innocent but face real reputation threats. You never sent intimate images. You never engaged in risky online behavior. The scammer simply found your professional headshot and fabricated everything.

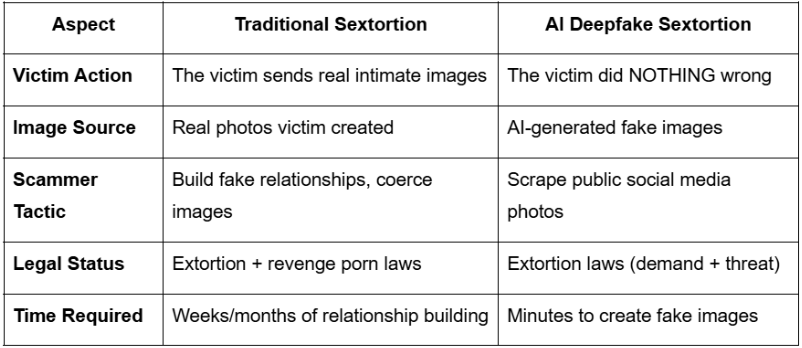

This differs fundamentally from traditional sextortion, where victims were coerced into sending real intimate images. AI technology eliminated that requirement.

How AI Tools Create Fake Images From LinkedIn Photos

Open-source AI image generators transform professional headshots into explicit deepfake content within minutes using face-swapping algorithms.

Scammers scrape LinkedIn, company websites, and professional directories. They upload your corporate headshot to AI tools. The algorithm face-swaps your image onto explicit body templates.

The output looks realistic to most viewers. The entire process takes 2-5 minutes per image.

The technology is extremely powerful, user-friendly, and widely available. No technical skill is required. Anyone can access these tools.

Your innocent LinkedIn photo becomes their weapon. Your company website headshot becomes their source material. Your Facebook profile picture becomes their leverage.

Traditional Sextortion vs. AI Deepfake Sextortion

Understanding what to do if someone blackmails you requires distinguishing AI-generated threats from traditional sextortion schemes.

Why Professionals and Executives Are Prime Targets

Scammers target employed professionals with LinkedIn profiles, corporate headshots, and high financial capacity through systematic photo scraping operations.

LinkedIn provides high-quality professional headshots. Scammers assume employed professionals have $3,500-$10,000 disposable income.

Married professionals face dual threats. Career destruction plus marriage damage creates maximum pressure to pay.

License-dependent professionals are highly vulnerable. Doctors, lawyers, and CPAs face professional board exposure. Public-facing roles like salespeople, consultants, and executives are easy to research.

Corporate websites list employee names, titles, and contact information. Everything scammers need is publicly available.

Professionals facing escort blackmail or AI deepfake threats share similar reputation protection needs.

How Scammers Scrape LinkedIn for Target Photos

Automated bots scrape thousands of LinkedIn profile photos daily, prioritizing executives, licensed professionals, and public-facing employees.

Bots crawl LinkedIn by industry. Finance, healthcare, and legal sectors are top targets.

Target selection criteria include job title, company size, and location. Scammers download profile photos plus employment information. They cross-reference with Facebook and company websites for additional images.

The system builds target databases with contact information. Automated AI generation creates fake images at scale. Mass blackmail campaigns reach hundreds simultaneously.

Your LinkedIn headshot becomes their source material. Your professional brand becomes their targeting criteria.

Why Executives and Licensed Professionals Pay Most

Scammers demand higher payments from professionals whose careers depend on reputation and licensing board approval.

Doctors face medical board exposure. License suspension follows ethics complaints.

Lawyers face bar association complaints. Disbarment risk forces immediate action.

CPAs face ethics violations. Certification revocation destroys careers.

Executives face HR notification. Termination follows investigations.

Married professionals face spouse notification. Divorce proceedings destroy families.

Higher stakes drive higher ransom demands. Scammers target $2,000-$10,000 from license-dependent professionals.

Is AI Sextortion Illegal? Yes—Even When Images Are Fake

Federal and state extortion laws prosecute AI deepfake blackmail regardless of image authenticity because the crime is the threat combined with a demand, not the image source.

The criminal element is simple. Threat plus demand for value equals extortion.

Image authenticity is legally irrelevant. Victim innocence doesn't eliminate criminal conduct.

You're innocent. The scammer is still committing a federal crime.

Prosecutors don't require real images for charges. The fabricated nature of AI-generated content doesn't reduce criminal liability.

Laws addressing deepfake-specific crimes lag behind technology. Traditional extortion statutes still apply fully.

As with the distinction between emotional and criminal blackmail, AI sextortion crosses into criminal territory when a threat is paired with a demand for payment.

Federal Law: 18 U.S.C. § 875 Applies to AI Threats

Federal law 18 U.S.C. § 875 criminalizes interstate threats transmitted via email, text, or social media demanding money or services.

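The statute covers interstate communications that carry extortion demands. Penalties depend on the subsection charged: up to 20 years in federal prison for extortionate threats to kidnap or injure a person under § 875(b), and up to two years for extortionate threats to injure reputation under § 875(d).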

The law applies when an email or text crosses state lines. Image authenticity is NOT required for prosecution.

The FBI investigates AI deepfake blackmail under this statute. Prosecution is possible even if victims don't pay.

Scammers face federal charges regardless of whether images are real or AI-generated. The threat itself constitutes the crime.

Ohio and State Laws Prosecute Regardless of Image Source

Ohio Revised Code § 2905.11 and similar state extortion statutes criminalize threats to expose information regardless of whether that information is fabricated.

Ohio R.C. § 2905.11 defines extortion as a third-degree felony. Penalties include 9-36 months imprisonment.

California Penal Code § 518 prosecutes extortion via threats. Texas Penal Code § 31.03 criminalizes theft by extortion.

State prosecutors recognize that demand plus threat equals completed crime. Fabricated images still constitute a "threat to expose."

Multi-state cases require attorney coordination. Different jurisdictions apply different statutes to the same conduct.

Why Innocent Professionals Still Need Attorney Representation

Professionals targeted by AI deepfake blackmail require immediate attorney representation because reputation damage occurs before anyone verifies image authenticity.

The timeline creates the problem. Employers see images BEFORE forensic analysis. Spouses react BEFORE explanations.

First impressions become lasting impressions. You know the images are fake. Your employer reacts emotionally anyway.

HR departments investigate before asking questions. Spouses demand explanations before listening. Professional licensing boards open inquiries immediately.

You're innocent. You still need legal protection.

The Anti-Extortion Protocol provides confidential legal representation protecting professional careers while addressing AI deepfake threats.

Attorney-client privilege protects confidentiality. Legal representation prevents premature disclosures. Evidence preservation supports potential prosecution. Coordinated response prevents escalation.

Employer and Spouse Reactions Happen Before Verification

HR departments and spouses react emotionally to explicit images before forensic experts verify AI fabrication, creating immediate career and marriage damage.

The HR notification timeline moves fast. Images arrive. Investigations open. You're contacted.

Damage occurs before you can explain that the images are AI-generated. Spouses see explicit images. Emotional reactions destroy trust.

Explaining that "it's a deepfake" sounds like an excuse. Professional networks see images. Reputation harm is immediate.

Attorney representation manages the disclosure timeline and messaging. Professionals report being placed on administrative leave pending investigation, even after explaining that the images are fabricated.

Understanding how to collect evidence safely protects your legal case while preserving professional licenses.

Professional License Board Exposure Risks

State licensing boards for doctors, lawyers, and CPAs open ethics investigations when explicit images surface, regardless of authenticity, requiring immediate attorney defense.

Medical boards investigate "conduct unbecoming" complaints. Bar associations file ethics complaints. CPA licensing boards conduct professional standards reviews.

The burden of proof falls on you. YOU must prove the images are fake.

Investigation processes last 6-12 months. Investigations appear on background checks as public records.

An attorney can present forensic analysis to the board immediately. This helps prevent a lengthy investigation from destroying a career.

How to Respond to AI Deepfake Blackmail Threats

Response to AI deepfake blackmail requires distinguishing mass scam emails from targeted threats with specific employer or spouse information.

The decision framework comes down to one triage question: "Do they have specific information about you?"

Red flags requiring immediate attorney contact include your name, employer, spouse name, or LinkedIn connections mentioned in threats.

Similar to identifying real sextortion emails, AI deepfake threats require triage between mass scams and targeted blackmail.

Mass AI Scam Emails vs. Targeted Deepfake Threats

Mass AI scam emails claim to have images but provide no proof. Targeted threats include actual AI-generated images with specific employer or family details.

Mass Scam (Ignore):

Generic threats with no images shown

"I have your photos" without proof

Sent to thousands simultaneously

No specific employer or spouse information

Demands Bitcoin payment

Grammar errors and generic language

Targeted Threat (Call Attorney):

AI-generated images attached or linked

Specific: "I'll send to [your boss name] at [company]"

Mentions spouse's name or LinkedIn connections

Demands $500-$5,000

Professional, detailed threats

Research on your background is evident
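The two checklists above can be read as a simple decision rule: any single red flag moves a message from "ignore" to "call an attorney." The sketch below illustrates that rule only. The class name, field names, and return values are hypothetical labels chosen for this example, not part of any real tool, and the output is no substitute for an attorney's review.

```python
from dataclasses import dataclass


@dataclass
class Threat:
    """Features of a blackmail message, taken from the checklists above.

    All field names are illustrative assumptions made for this sketch.
    """
    images_attached_or_linked: bool = False
    names_employer_or_boss: bool = False
    names_spouse_or_family: bool = False
    references_linkedin_connections: bool = False
    threatens_professional_license: bool = False


def triage(threat: Threat) -> str:
    """Return 'targeted' if any red flag is present, otherwise 'mass_scam'."""
    red_flags = [
        threat.images_attached_or_linked,
        threat.names_employer_or_boss,
        threat.names_spouse_or_family,
        threat.references_linkedin_connections,
        threat.threatens_professional_license,
    ]
    return "targeted" if any(red_flags) else "mass_scam"


# A generic "I have your photos" email with no proof and no personal details.
generic_email = Threat()

# A message that attaches images and names the recipient's boss.
specific_email = Threat(images_attached_or_linked=True, names_employer_or_boss=True)

print(triage(generic_email))
print(triage(specific_email))
```

A payment demand is deliberately left out of the flags, because both mass scams and targeted threats include one. What separates the two categories is proof and personal detail.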

When to Call an Attorney Immediately

Call an attorney immediately if threats include AI-generated images, specific employer information, spouse names, or demands under $10,000.

Call when fake images are shown or linked. Call when employer or company names are mentioned. Call when spouse or family members are named.

Call when LinkedIn connections are referenced. Call when professional licenses are threatened. Call when demand amounts are specified in the $500-$10,000 range.

Call when multiple contact attempts occur. Call when the scammer sets a deadline to pressure you.

(440) 581-2075

Frequently Asked Questions

Q: Are AI sextortion threats real or just scams?

AI sextortion includes both mass scams and real targeted threats. Mass emails claiming "I have your photos" without proof are scams sent to thousands. Targeted threats showing actual AI-generated images with specific employer or spouse information require immediate attorney response. Distinguishing between the two determines appropriate action.

Q: Can scammers really create fake explicit images from my LinkedIn photo?

Yes. Open-source AI tools transform innocent LinkedIn headshots into realistic fake explicit images within minutes. Scammers scrape professional photos from LinkedIn, company websites, and social media. Face-swapping algorithms create fabricated content. The technology is widely available, user-friendly, and requires no technical expertise.

Q: Is AI deepfake blackmail illegal if the images aren't real?

Yes. Federal law 18 U.S.C. § 875 and state extortion statutes prosecute blackmail based on the threat combined with a demand, not image authenticity. Extortion occurs when someone threatens to expose information—real or fabricated—to coerce payment. Image source is legally irrelevant to criminal charges.

AI deepfake sextortion targets professionals with LinkedIn profiles, corporate headshots, and career stakes through fabricated explicit images. Federal and state extortion laws prosecute blackmail regardless of image authenticity. Innocent professionals face immediate reputation damage requiring confidential attorney representation.

The Anti-Extortion Law Firm provides confidential legal protection for professionals targeted by AI deepfake blackmail.

(440) 581-2075 | Ohio Bar #101457 | 1818 Euclid Ave, Cleveland, OH 44115